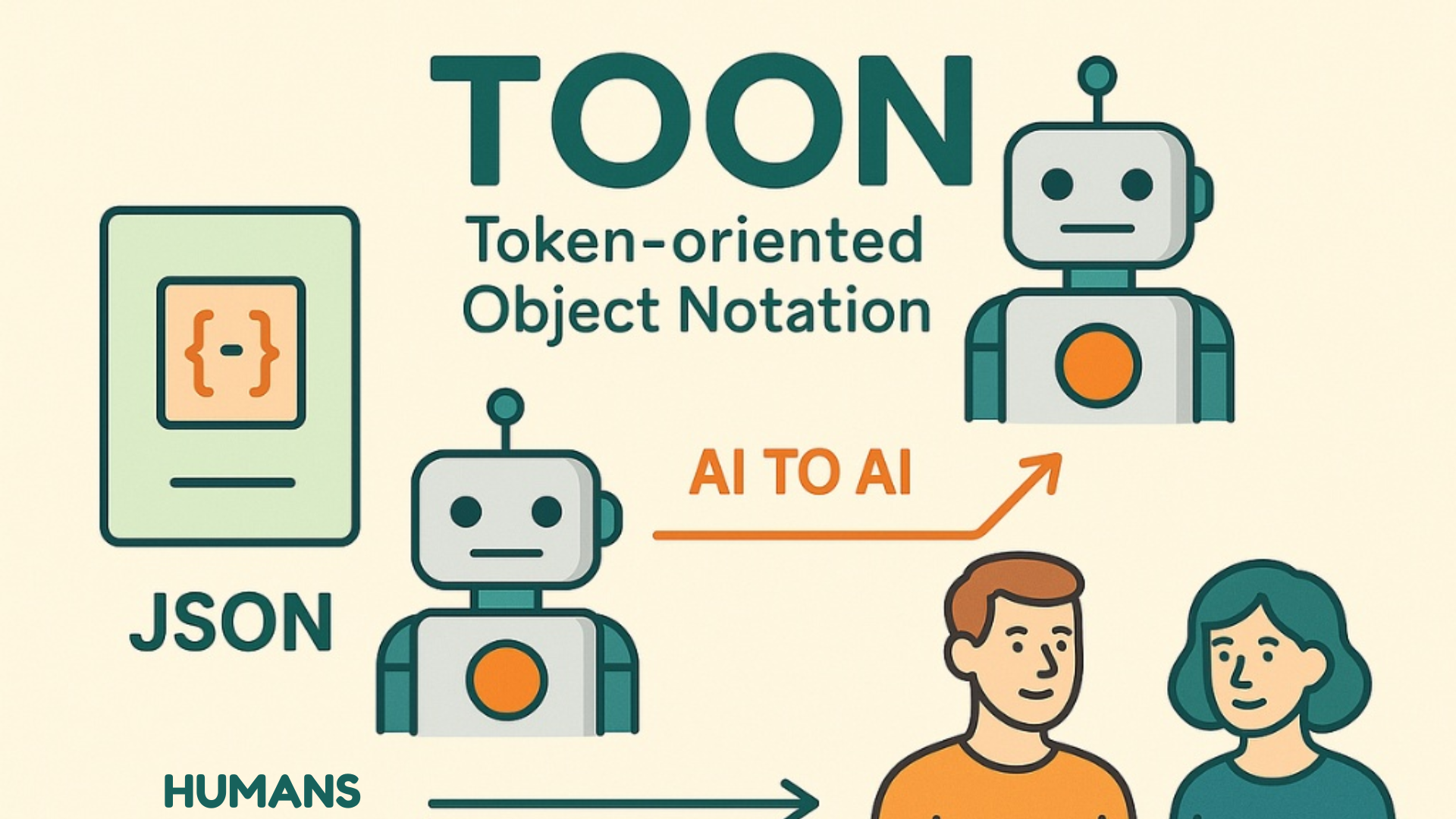

TOON (Token-Oriented Object Notation): A Token-Efficient Alternative to JSON for the AI Era

For years, JSON (JavaScript Object Notation) has been the backbone of modern data exchange. It is reliable, widely adopted, and easy for machines to parse.

From APIs and automation workflows to configuration files and web applications, JSON is everywhere — and for good reason.

But the way we use data today has evolved.

Modern systems increasingly pass structured data to AI models and LLM-powered applications, where the size of that data carries a direct cost.

JSON was designed for readability and structure, not token efficiency. When working with large language models, repeated keys, quotation marks, and structural syntax can significantly increase token usage — which directly affects cost, latency, and scalability.

This is where a new concept emerges: TOON.

What Is TOON?

TOON (Token-Oriented Object Notation) is a lightweight data representation format designed specifically for token efficiency in AI-driven systems.

Its core philosophy is simple:

Fewer characters → fewer tokens → lower cost → faster processing.

TOON reduces structural overhead while preserving the meaning and structure of data. It is designed to work particularly well in environments where token usage matters, such as:

- AI prompt engineering

- LLM-to-LLM communication

- automation workflows

- high-volume APIs

- latency-sensitive systems

Instead of prioritizing human readability alone, TOON prioritizes efficient communication between humans, machines, and AI models.

Why Token Efficiency Matters

In traditional software systems, extra characters rarely matter.

But in AI systems, tokens are a measurable resource.

Every prompt, response, and structured payload processed by an AI model is counted in tokens. These tokens directly impact:

- API costs

- response latency

- context window limits

- system scalability

JSON was never designed with these constraints in mind.

Repeated key names, quotation marks, commas, and braces add structural overhead that increases token usage without adding meaningful semantic value.

At a small scale this overhead is negligible.

At AI scale, it becomes expensive.

TOON aims to reduce that unnecessary overhead.
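To make the overhead concrete, here is a minimal sketch that measures how much of a compact JSON payload consists of structural characters and repeated key names. The sample records are hypothetical, and character counts are only a rough proxy for tokens, since real counts depend on the model's tokenizer.

```python
import json

# Characters that JSON uses for structure rather than data.
# Rough count: these are treated as structural even inside string values.
STRUCTURAL = set('{}[]":,')


def structural_overhead(payload) -> float:
    """Return the fraction of characters in compact JSON that are structural."""
    text = json.dumps(payload, separators=(",", ":"))
    structural = sum(1 for ch in text if ch in STRUCTURAL)
    return structural / len(text)


# Hypothetical sample data: the same three keys repeated in every record.
records = [
    {"user": f"user{i}", "role": "admin", "active": True} for i in range(100)
]
text = json.dumps(records, separators=(",", ":"))

# Characters spent on key names alone, across all records.
key_chars = sum(
    text.count(f'"{key}"') * len(key) for key in ("user", "role", "active")
)

print(f"total characters: {len(text)}")
print(f"structural share: {structural_overhead(records):.0%}")
print(f"repeated key-name share: {key_chars / len(text):.0%}")
```

Running this on your own payloads gives a quick sense of whether a more compact representation is worth investigating before measuring actual token counts.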

What Changes with TOON?

TOON preserves the intent of structured data while reducing verbosity.

The idea is not to remove structure — but to compress how that structure is expressed.

For example, JSON often represents states with explicit key-value pairs:

"active": true

In TOON, the same intent can be expressed with semantic shorthand:

active+

This approach reduces the character count and, with most tokenizers, the token count, which matters most in large prompt structures. The exact saving depends on the tokenizer, so it is worth measuring rather than assuming.

The result is:

- smaller payloads

- faster processing

- lower AI costs

Example: JSON vs TOON

Consider a simple user object.

JSON

{
  "user": "Alex",
  "role": "admin",
  "active": true
}

TOON

user:Alex role:admin active+

Both formats express the same information.

However, TOON reduces structural syntax and repetition, making it more compact — which can translate into lower token usage when used inside AI prompts or model communication layers.
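The comparison above can be reproduced with a small encoder. This is a sketch that follows only the shorthand shown in this article, not a published specification. The function name `to_toon`, the `key-` form for false values, and the restriction to flat objects whose values contain no spaces or colons are all assumptions made for illustration.

```python
import json


def to_toon(obj: dict) -> str:
    """Encode a flat dict using the shorthand from the example above.

    Assumptions (not from a spec): True becomes `key+`, False becomes
    `key-`, other values are written as plain text, and values must not
    contain spaces or colons.
    """
    parts = []
    for key, value in obj.items():
        if value is True:
            parts.append(f"{key}+")
        elif value is False:
            parts.append(f"{key}-")  # hypothetical counterpart to `key+`
        else:
            text = str(value)
            if " " in text or ":" in text:
                raise ValueError(f"unsupported value for {key!r}: {text!r}")
            parts.append(f"{key}:{text}")
    return " ".join(parts)


user = {"user": "Alex", "role": "admin", "active": True}
as_json = json.dumps(user, separators=(",", ":"))
as_toon = to_toon(user)

print(as_toon)                     # user:Alex role:admin active+
print(len(as_json), len(as_toon))  # 44 28
```

Even against compact JSON with no whitespace, the shorthand is shorter for this object. Character counts are not token counts, though, so a real evaluation should run both strings through the tokenizer of the target model.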

Design Principles Behind TOON

TOON is built around a few key principles that focus on efficiency and predictability.

Token-First Design

Every symbol and separator is chosen to minimize token usage while preserving clarity.

Semantic Compression

Expressive shorthand communicates intent with fewer characters while keeping meaning intact.

Predictable Structure

Strict structural rules allow TOON to be parsed, validated, and transformed reliably.
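The article does not spell out those rules, so the following is only a sketch of what strict validation could look like. The grammar is an assumption: tokens are separated by single spaces, and each token is `key:value`, `key+`, or a hypothetical `key-` for false.

```python
import re

# Assumed grammar for one token: `key:value`, `key+`, or `key-`.
# Keys are identifiers; values contain no whitespace or colons.
TOKEN = re.compile(r"[A-Za-z_][A-Za-z0-9_]*(?:[+-]|:[^\s:]+)")


def is_valid_toon(line: str) -> bool:
    """Return True if every space-separated token matches the assumed grammar."""
    tokens = line.split(" ")
    return all(TOKEN.fullmatch(token) is not None for token in tokens)


print(is_valid_toon("user:Alex role:admin active+"))  # True
print(is_valid_toon("user: Alex"))                    # False
```

Rejecting anything outside a small grammar is what makes the format predictable: a payload either parses one way or is refused.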

JSON Compatibility

TOON is not meant to replace JSON. It can be converted back into JSON and integrated with existing schemas and systems.
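As an illustration of the conversion back to JSON, here is a minimal decoder for the shorthand used in this article. The function name `from_toon` and the `key-` form for false are assumptions, and only flat objects are handled.

```python
import json


def from_toon(line: str) -> dict:
    """Decode the article's shorthand into a dict.

    Assumptions: `key:value` yields a string value, `key+` yields True,
    and the hypothetical `key-` yields False.
    """
    result = {}
    for token in line.split():
        key, sep, value = token.partition(":")
        if sep:
            # Checked first so that a value such as `A+` is not read as a flag.
            result[key] = value
        elif token.endswith("+"):
            result[token[:-1]] = True
        elif token.endswith("-"):
            result[token[:-1]] = False
        else:
            raise ValueError(f"unrecognized token: {token!r}")
    return result


decoded = from_toon("user:Alex role:admin active+")
print(json.dumps(decoded))
# {"user": "Alex", "role": "admin", "active": true}
```

Note that in this sketch every non-boolean value comes back as a string, so numbers and nulls would not survive a round trip. A real format would need explicit rules for types before the conversion could be called lossless.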

Where TOON Fits Best

TOON is most useful in environments where token efficiency and system performance matter.

AI & LLM Workflows

Prompt payloads, memory serialization, tool inputs, and AI-to-AI communication.

APIs and Microservices

Reduced payload size can improve transfer speed and reduce bandwidth usage.

Automation Platforms

Simplified internal representations make rule engines and workflows easier to manage.

Documentation and Internal Systems

Less visual noise can make structured information easier to read and maintain.

Is TOON Meant to Replace JSON?

No — and it should not.

JSON remains the industry standard for data storage, interoperability, and public APIs.

TOON is better viewed as an abstraction layer designed for token-efficient environments, particularly where AI systems interact with structured data.

In many implementations, TOON would act as a compressed representation that can translate into JSON when required.

This approach allows systems to maintain compatibility while benefiting from improved efficiency.

Final Thought

As AI systems continue to scale, efficiency becomes a core design requirement rather than an optimization.

Data structures that were designed for human readability alone may not be ideal for token-priced environments.

TOON does not challenge JSON’s dominance.

Instead, it complements it by aligning data representation with the realities of modern AI systems — where every token has a cost.

In a world increasingly powered by LLMs, token-efficient data formats may become an important part of system design.